ChatGPT and Claude are extraordinary tools. They are not antitrust analysts.

The same is true of Gemini, Grok, and every other general-purpose LLM. General reasoning keeps improving. Merger antitrust analysis still needs specialized knowledge, repeatable structure, and verification that a chat session was never designed to provide.

Taimet exists because the gap between what these models can produce in isolation and what this work actually requires is still enormous. We use frontier models inside a purpose-built system, the same way the best developer tools wrap models in serious engineering.

The models are excellent.

The missing piece is the harness.

We use these models ourselves. Claude's extended reasoning, OpenAI's deep research features, Gemini's long context: they are genuinely useful for the right problems. None of that is in dispute.

The past few years have also made something else obvious. Some of the most valuable AI products are not raw chat but tools built around models, such as Claude Code, Cursor, Windsurf, and vertical SaaS products that route tasks, validate output, and keep work inside constraints a conversation alone cannot enforce.

The companies producing durable value are wrapping frontier models in pipelines, tests, prompts, and product design that the model cannot supply by itself.

Taimet is that kind of system, built for merger antitrust analysis.

The frontier models are an ingredient. The architecture is where enforcement experience, verification, and repeatable structure live.

A couple of years ago, the fear was that better LLMs would eventually make specialized software unnecessary. We are now seeing the opposite: the platforms surrounding the models matter more than ever.

Confidently wrong

The most dangerous failure mode is not a visible mistake. It is the plausible-sounding answer that never trips your skepticism, then compounds through everything you build on top of it.

Example: fabricated statute (documented across major models)

Model-style answer

“The Michigan Antitrust Reform Act (MARA) contains an explicit parens patriae provision. MCL 445.778 authorizes the Michigan Attorney General to bring civil actions …”

What the statute actually does

Michigan's Antitrust Reform Act does not expressly provide parens patriae authority in the way the model claimed. The cited section does not say what the answer asserts it does. That is a falsifiable hallucination with a citation-shaped fig leaf, which is exactly the pattern that poisons downstream analysis.

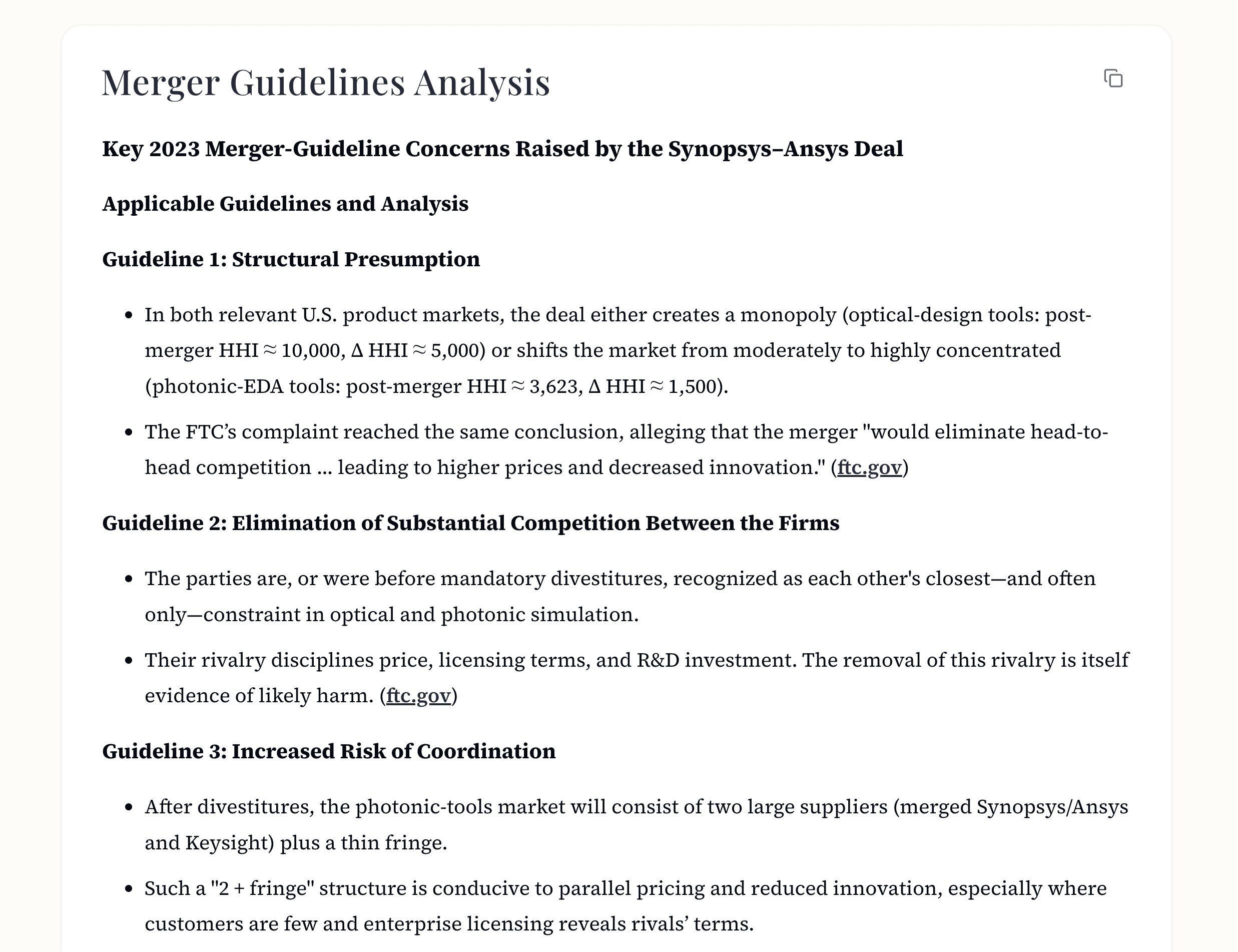

Wrong merger guidelines

Ask which merger guidelines apply, and a general-purpose model frequently reaches for the superseded framework (the 2010 Horizontal and 2020 Vertical Merger Guidelines) rather than the 2023 Merger Guidelines that the FTC and DOJ issued jointly. Even when it cites the 2023 Guidelines, it often misses critical specifics. That is not a minor formatting issue. It misframes the entire legal standard.

Invented lists of state statutes

Ask which states maintain a healthcare premerger notification law and you will often get a confident, wrong enumeration. That is lethal in a practice area where being off by one jurisdiction changes legal risk.

Vague, hedge-filled “analysis”

Ask about a vertical trend in a niche industry (alfalfa seed, for example) and you may receive something that reads like insight: consolidation, supply-chain themes, caveats about mixed structures. It is difficult to fact-check because it never pins itself to a claim. It also captures none of what a practitioner would actually need to know about real events in that market. That failure mode does not trigger alarm bells. It is still useless for a high-stakes screen.

These are not exotic edge cases. They are what happens when a system has no enforcement-grade map of the law, no verification layer, and no obligation to stay inside checkable claims.

A jagged frontier, not a smooth curve

Today's LLMs are uneven. They are exceptional at some tasks and surprisingly brittle at others. Each family of models has a different shape: what elicits strong performance in Claude may not work in the latest ChatGPT release, and vice versa. A prompt that works well on one model version can degrade on the next.

For most people, that landscape is impossible to monitor. For antitrust work, guessing wrong is not a productivity annoyance. It is liability.

Taimet is designed for that reality. Tasks are routed to the models that handle them best. Prompting is tuned per provider and per role. Known failure modes are avoided rather than discovered in production. Multiple providers run in parallel or in sequence depending on what the stage requires.

When a base model improves, Taimet benefits: we are not competing with frontier research. We are putting it inside a system meant to extract reliable output from it. The relationship is symbiotic.

Why this matters for practitioners

- A single long chat thread is one lens, one memory, one pass. It cannot replicate a multi-agent research and verification architecture.

- “Try again with a better prompt” does not scale when the error is subtle, legal, and expensive.

- Expert work needs structural guardrails, not conversational luck.

A pipeline, not a prompt.

A typical ChatGPT or Claude session is one model, one thread, one pass. A typical Taimet analysis runs more than a hundred coordinated LLM responses across providers, with expert-tuned prompts and verification at stages where a single hallucination would otherwise poison the file.

Taimet deliberately pairs models in tension: one pass generates, another interrogates. Claims are tested, weak support is surfaced, and reasoning is cross-checked before output is finalized. The version you read has already been challenged, not just polished.

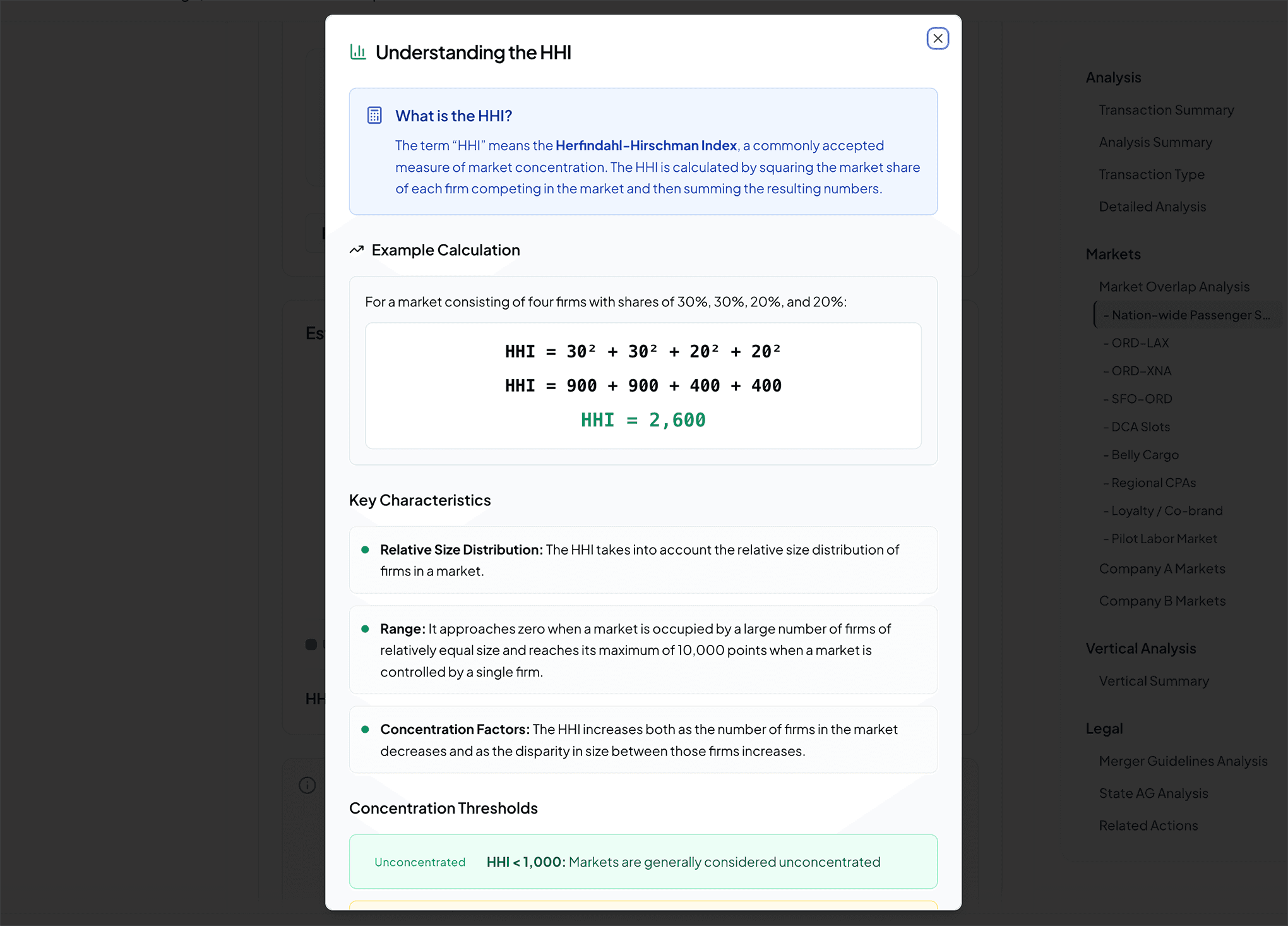

Flexibility where judgment matters; structure where auditability matters. Agents explore hypotheses the way a skilled researcher does, but checkpoints freeze conclusions into deterministic form: markets, HHI, vertical relationships, score components, citations.
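To make "deterministic form" concrete, here is a minimal Python sketch of what an HHI checkpoint could look like, assuming market shares for one candidate market have already been estimated as percentages. The names `ConcentrationCheckpoint` and `concentration_checkpoint` are hypothetical and are not Taimet's code. The presumption test follows the structural thresholds in the 2023 Merger Guidelines: an increase of more than 100 points combined with either a post-merger HHI above 1,800 or a combined share above 30 percent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConcentrationCheckpoint:
    """Frozen (immutable) record of one candidate market's concentration figures."""
    pre_hhi: float
    post_hhi: float
    delta_hhi: float
    combined_share: float
    presumption: bool


def hhi(shares: list[float]) -> float:
    """Herfindahl-Hirschman Index: sum of squared shares, in percentage points."""
    return sum(s * s for s in shares)


def concentration_checkpoint(
    shares: dict[str, float], party_a: str, party_b: str
) -> ConcentrationCheckpoint:
    """Compute pre/post-merger HHI for two merging firms in one market.

    Hypothetical helper; assumes shares are percentages (0-100) summing to about 100.
    """
    pre = hhi(list(shares.values()))
    combined = shares[party_a] + shares[party_b]
    rivals = [s for name, s in shares.items() if name not in (party_a, party_b)]
    post = hhi(rivals + [combined])
    delta = post - pre  # algebraically equal to 2 * share_a * share_b
    # Structural presumption thresholds as stated in the 2023 Merger Guidelines.
    presumption = delta > 100 and (post > 1800 or combined > 30)
    return ConcentrationCheckpoint(pre, post, delta, combined, presumption)


# Illustrative shares only; not data about any real market.
checkpoint = concentration_checkpoint(
    {"Acme": 30.0, "Widget": 20.0, "Rival1": 25.0, "Rival2": 25.0}, "Acme", "Widget"
)
print(checkpoint)
```

For this illustrative four-firm market, the sketch yields a pre-merger HHI of 2,550, a post-merger HHI of 3,750, and a delta of 1,200 (2 × 30 × 20), so the presumption flag is set. Because the record is frozen, later stages can read these numbers but cannot quietly overwrite them.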

Verification is continuous, not an afterthought. Citation-checking agents confirm text supports claims. Reasoning-consistency checks compare conclusions across agents. Unsupported claims are flagged. Stages cross-validate before the report closes.
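The text assigns citation checking to agents, but one narrow part of it can be made fully mechanical. The sketch below assumes each claim carries a hypothetical `source_id` and a verbatim `excerpt`, and flags any claim whose source was never retrieved or whose excerpt does not literally appear in the retrieved text. That literal check is enough to catch citation-shaped fabrications like the Michigan example; judging whether a genuine excerpt actually supports the claim is the semantic step the text describes agents performing, and it is not shown here.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    text: str
    source_id: str  # hypothetical key into a store of retrieved source texts
    excerpt: str    # verbatim passage the claim relies on


def normalize(s: str) -> str:
    """Collapse whitespace and case so line breaks in sources do not cause misses."""
    return re.sub(r"\s+", " ", s).strip().lower()


def flag_unsupported(claims: list[Claim], sources: dict[str, str]) -> list[tuple[Claim, str]]:
    """Return (claim, reason) pairs for claims that fail a literal citation check."""
    flags = []
    for claim in claims:
        source = sources.get(claim.source_id)
        if source is None:
            flags.append((claim, "cited source was never retrieved"))
        elif not claim.excerpt.strip():
            flags.append((claim, "no excerpt provided"))
        elif normalize(claim.excerpt) not in normalize(source):
            flags.append((claim, "excerpt not found in cited source"))
    return flags


# Illustrative, made-up source text and claims.
sources = {"statute-x": "Sec. 1.  The attorney general may bring\nan action for injunctive relief."}
claims = [
    Claim("AG may seek injunctions", "statute-x", "may bring an action for injunctive relief"),
    Claim("AG has parens patriae power", "statute-x", "may sue as parens patriae"),
    Claim("A second statute applies", "statute-y", "any text"),
]
flags = flag_unsupported(claims, sources)
for claim, reason in flags:
    print(f"FLAG: {claim.text!r}: {reason}")
```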

Multi-stage orchestration

Parallel where speed helps; sequential where dependency requires it; extra agents spawned when vertical exposure or market count demands depth.

Models in tension

Generation and critique as separate passes, so confident language cannot substitute for support.

Verification throughout

Citations checked against sources; consistency across agents; flags where proof is thin.

Prompted with the details practitioners actually use

ChatGPT, Claude, and Gemini do not arrive with a working map of Brown Shoe factors, multi-layer overlap analysis, how state AGs allocate attention across industries, or what the current political moment implies for enforcement. They can approximate textbook language. They cannot reliably apply the judgment that comes from twenty years inside investigations and court filings.

Taimet's prompting layer encodes how Gwendolyn J. Lindsay Cooley, Taimet's founder and a former Wisconsin Assistant Attorney General for Antitrust, actually reasons through a deal, drawing on the same perspective that informed the states' comments on the 2023 Merger Guidelines.

The output is not merely faster prose. It is a different kind of object: structured, sourced, and tuned to how mergers are reviewed in the real world, not how a model imagines they might be.

Pharmaceutical market definition

Small-molecule drugs are in the same market only if they share an identical molecular structure and are AB-rated to each other. Biologics are in the same market only if they are biosimilars and treat the same disease. The distinction determines whether a pharma deal looks like a horizontal overlap or a non-issue. Most analysts don't know it. Taimet does, and applies it automatically.
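Applying a rule "automatically" means encoding it. The following is a simplified Python sketch of the two screening rules exactly as stated above, assuming each product record already carries its kind, an active-ingredient identifier, its indications, its AB ratings, and (for biosimilars) its reference product. The `Drug` fields and product names are hypothetical, and this is an illustration of the stated rules, not Taimet's implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Drug:
    name: str
    kind: str                                   # "small_molecule" or "biologic"
    molecule: str                               # active-ingredient identifier (hypothetical field)
    indications: frozenset[str] = frozenset()
    ab_rated_to: frozenset[str] = frozenset()   # products this one is AB-rated to
    biosimilar_of: Optional[str] = None         # reference biologic, if a biosimilar


def same_product_market(x: Drug, y: Drug) -> bool:
    """Simplified encoding of the two market-definition rules stated in the text."""
    if x.kind != y.kind:
        return False
    if x.kind == "small_molecule":
        # Identical molecule AND an AB rating linking the two products.
        ab_rated = y.name in x.ab_rated_to or x.name in y.ab_rated_to
        return x.molecule == y.molecule and ab_rated
    if x.kind == "biologic":
        # Reference/biosimilar pair, or two biosimilars of the same reference...
        biosimilar = (
            x.biosimilar_of == y.name
            or y.biosimilar_of == x.name
            or (x.biosimilar_of is not None and x.biosimilar_of == y.biosimilar_of)
        )
        # ...that also treat at least one of the same diseases.
        return biosimilar and bool(x.indications & y.indications)
    raise ValueError(f"unknown drug kind: {x.kind}")


# Hypothetical products for illustration.
brand = Drug("BrandA", "small_molecule", "molecule-1")
generic = Drug("GenericA", "small_molecule", "molecule-1", ab_rated_to=frozenset({"BrandA"}))
other = Drug("BrandB", "small_molecule", "molecule-2")
ref = Drug("RefBio", "biologic", "protein-1", frozenset({"disease-x"}))
bios = Drug("BioSim", "biologic", "protein-1", frozenset({"disease-x"}), biosimilar_of="RefBio")

print(same_product_market(brand, generic))  # True
print(same_product_market(brand, other))    # False
print(same_product_market(ref, bios))       # True
```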

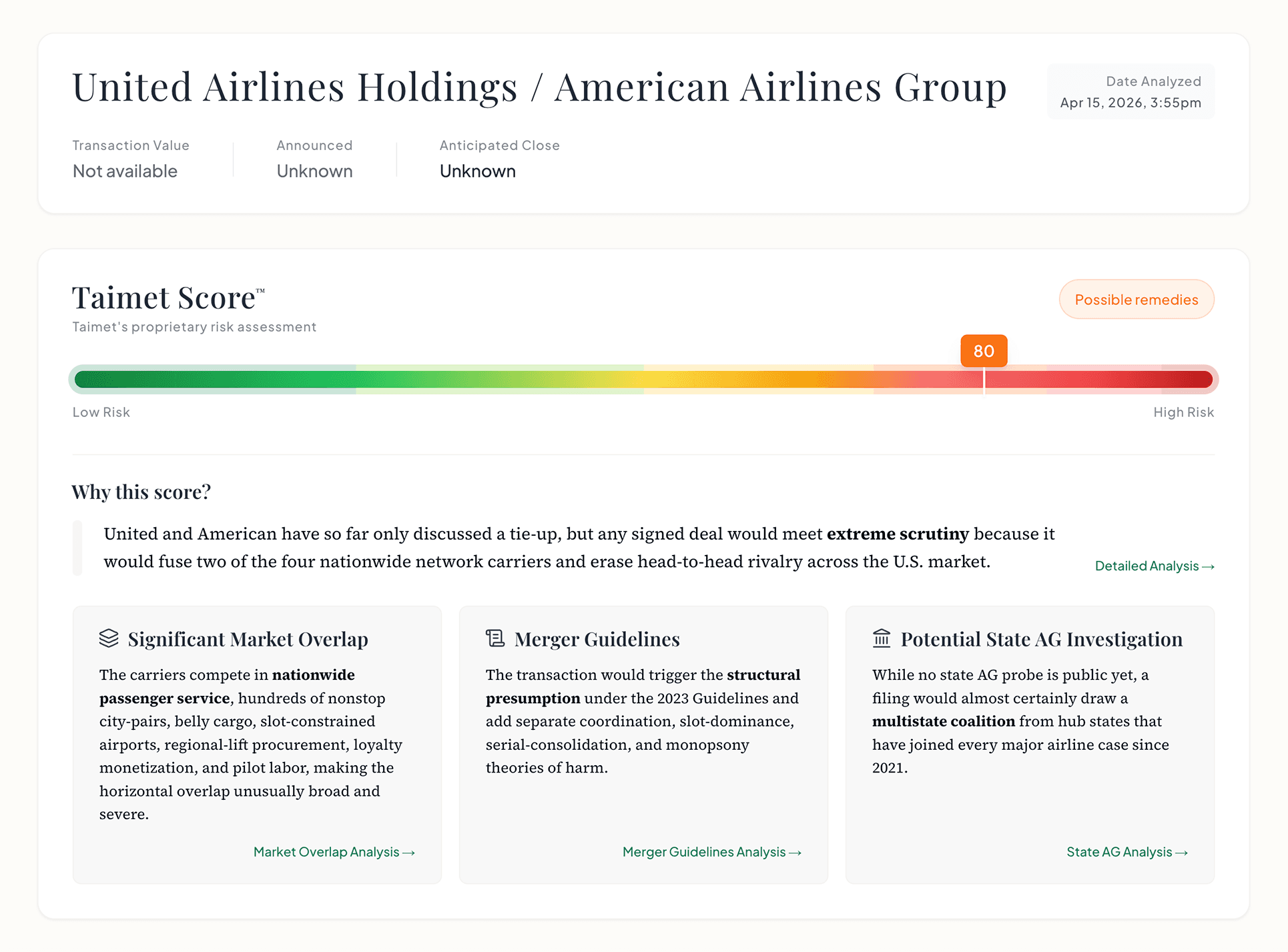

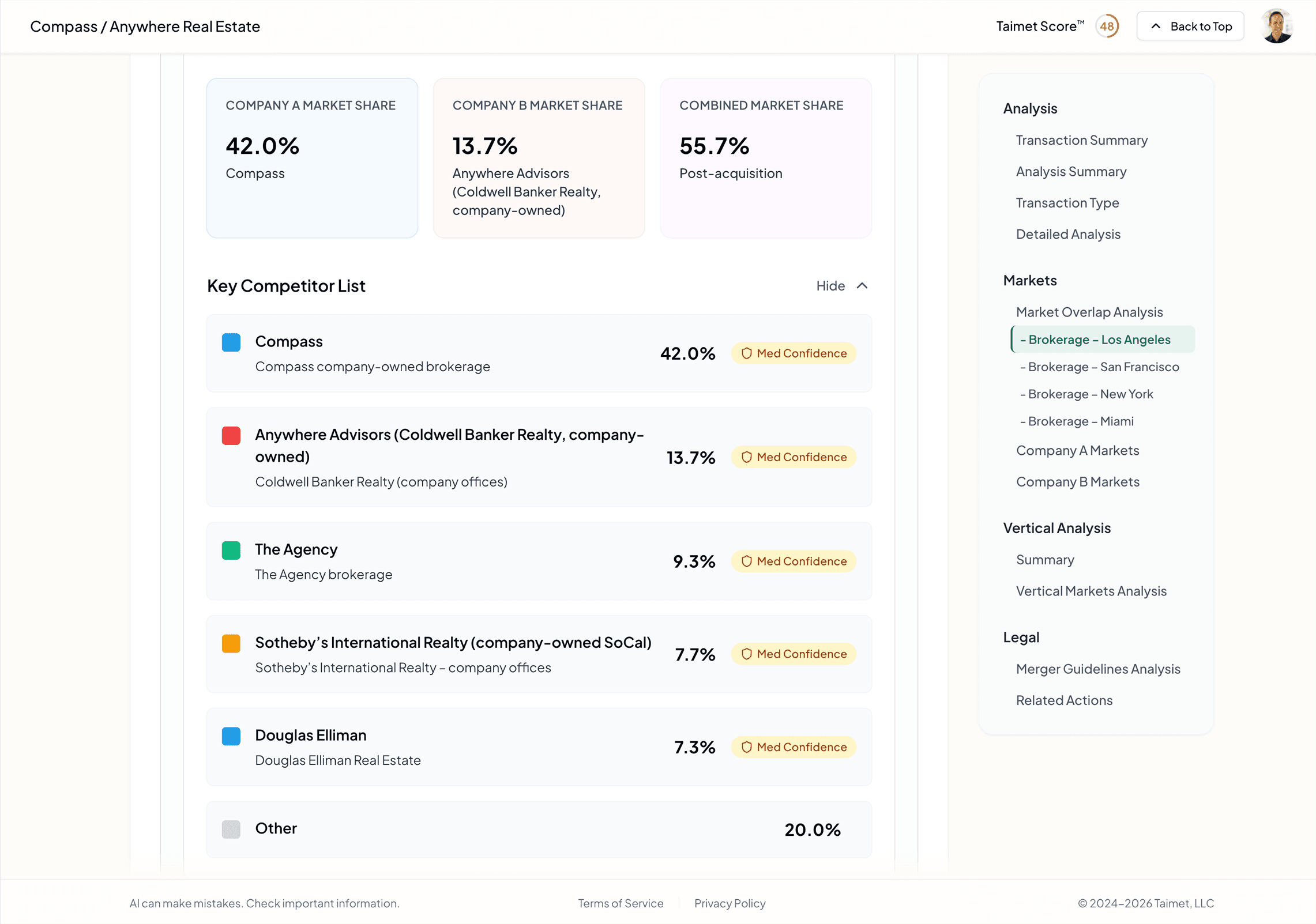

State enforcement patterns

Different state attorneys general challenge different kinds of mergers. Some are aggressive on hospital consolidation. Others on agriculture. Others on tech. Taimet knows which jurisdictions are likely to act on which transactions, which state-level enforcement theories apply, and which mergers will draw multistate coalitions.

Where the evidence actually lives

Pricing-power evidence often surfaces in investor presentations. Foreclosure intent shows up in board materials. Public statements about competitive intent surface in regulatory filings before they show up in press releases. Taimet knows where to look, because the person who designed it spent two decades looking there.

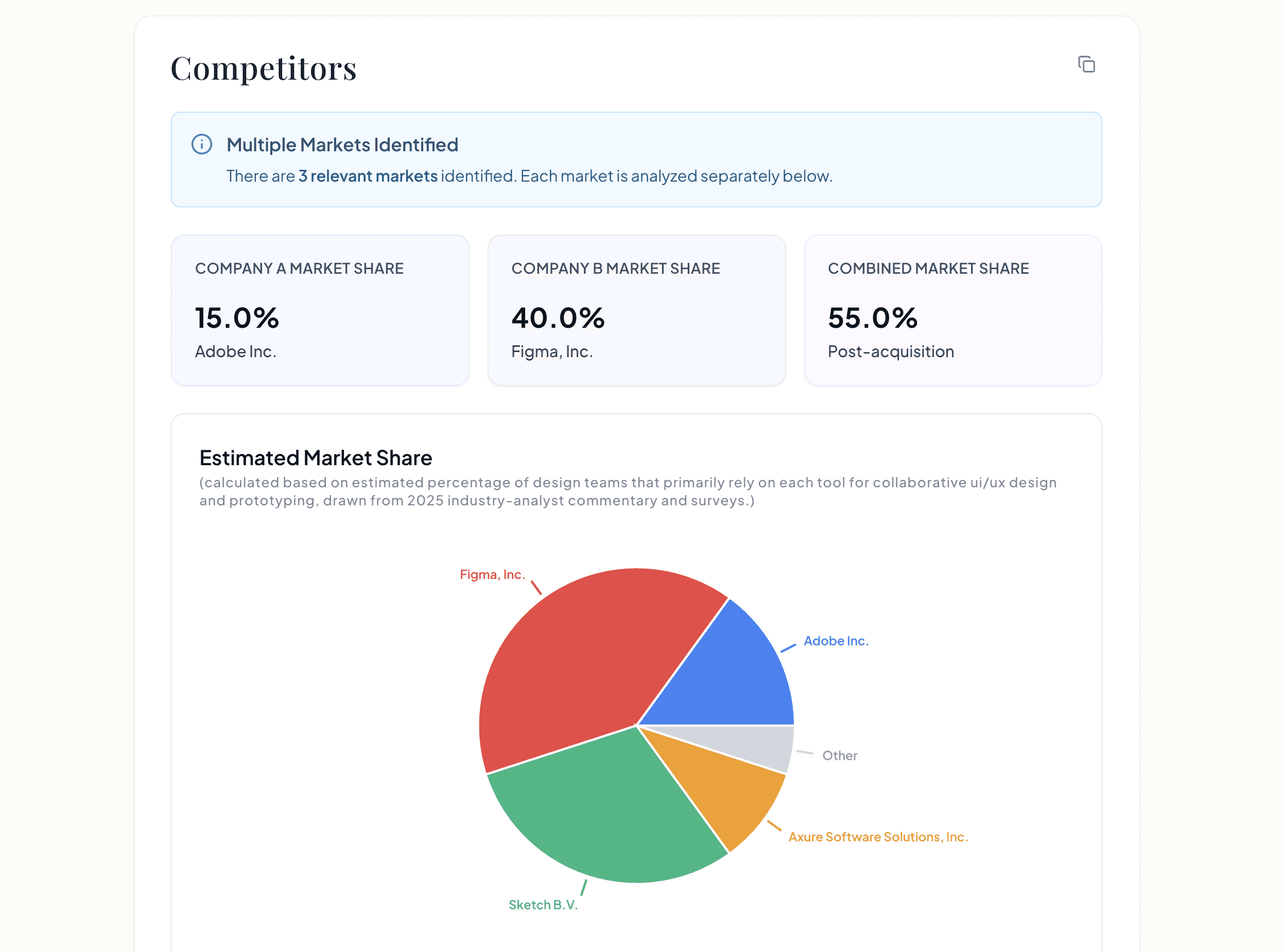

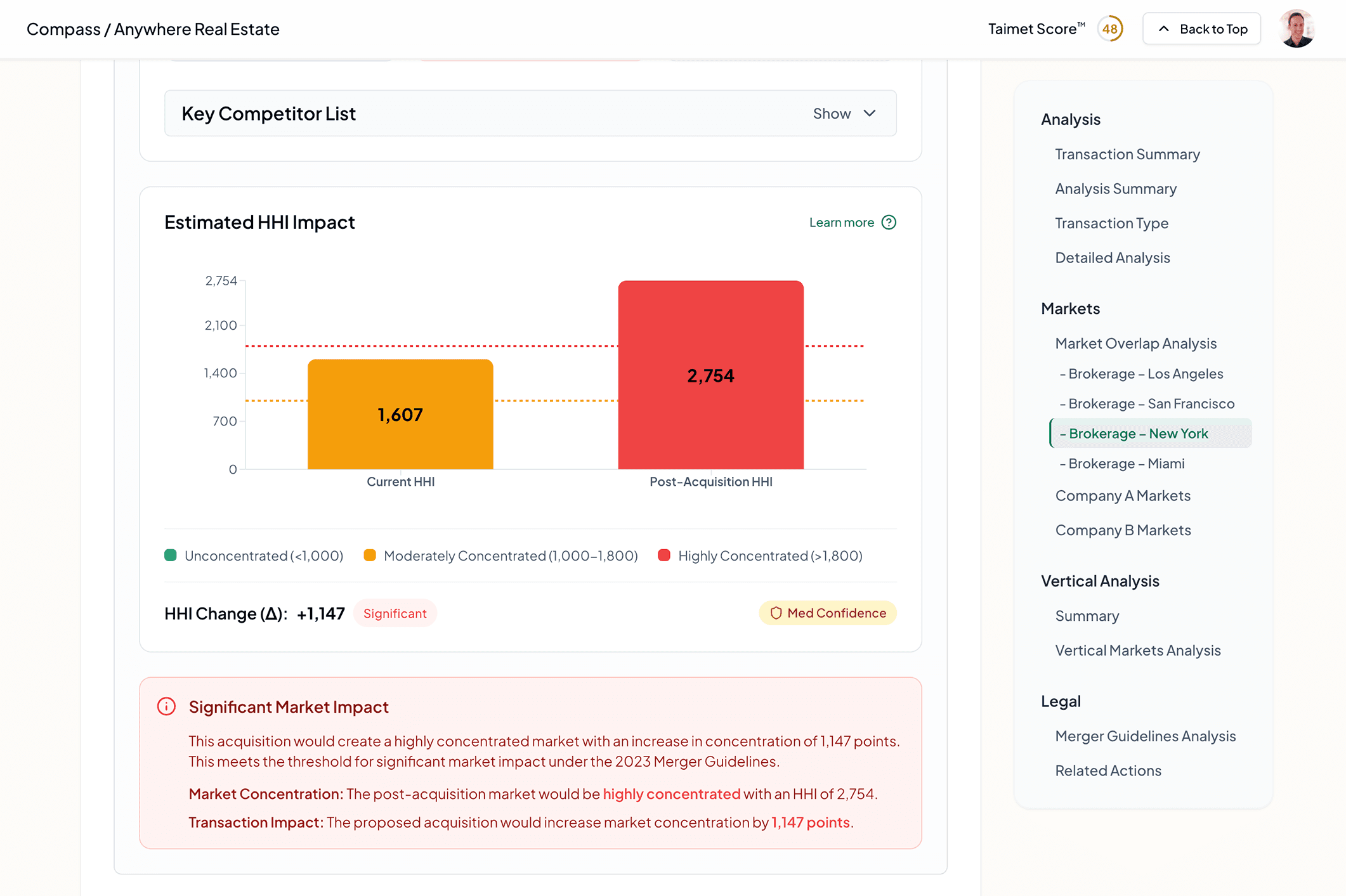

A thread versus an analysis

Ask a general-purpose model to “analyze this merger for antitrust risk,” and you will generally receive paragraphs of broad observations. Taimet produces a structured report: score, overlap, vertical theory, state AG lens, guidelines application, related conduct, and cited sources, written for people who need to act or defend a position.

Typical chat output

Here is a high-level overview of potential antitrust considerations for the proposed transaction. Merging parties should expect regulators to review horizontal overlaps and may assess vertical relationships where applicable. Market definition can significantly affect the analysis. Parties may consider efficiencies and potential remedies if concerns arise.

State enforcers sometimes take an interest in transactions affecting local markets. Political dynamics may influence timing. Counsel should monitor developments closely and prepare responsive arguments as needed.

No systematic citation verification, no score, no market list, no HHI, no structured vertical pass, no verified thread through the guidelines.

Taimet output

- Detailed overlap at multiple levels of product and geographic breadth

- Vertical analysis: foreclosure, competitively sensitive information, dual entry where relevant

- State AG posture and real-world political context

- Merger Guidelines application with sourced support where verifiable

Deeper research, not wider searching

A fair objection: Taimet works exclusively with public information. In principle, any AI tool with browsing could read some of the same pages.

The difference is not raw access. It is what happens after. A general-purpose “deep research” flow is still one agent following one storyline. It samples what looks salient, moves on, and synthesizes a readable summary. Useful for orientation. Insufficient when the task is to stress-test a transaction the way an enforcement team would.

Taimet runs many targeted research passes, each prompted with expert specificity about what to look for and where it tends to appear: companies, overlaps at varying market definitions, vertical links, state posture, political context, prior conduct, related actions, and more. Parallel results are reconciled by downstream agents that know how the pieces fit.

The same public record. Fundamentally different research.
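The fan-out-then-reconcile shape described in this section can be sketched with Python's `asyncio`: independent research passes run concurrently, and the reconciliation step runs only after every pass has returned. The topic list, the `research_pass` stub, the party names, and the result format are all hypothetical placeholders; a real pass would call a model or search API where the stub merely sleeps.

```python
import asyncio

# Hypothetical research topics, mirroring the kinds of passes listed above.
TOPICS = (
    "companies",
    "overlaps",
    "vertical_links",
    "state_posture",
    "political_context",
    "prior_conduct",
)


async def research_pass(topic: str, parties: tuple[str, str]) -> dict:
    """Stand-in for one narrowly prompted research agent."""
    await asyncio.sleep(0.01)  # placeholder for network-bound model/search calls
    return {"topic": topic, "findings": [f"{topic}: notes on {parties[0]} / {parties[1]}"]}


def reconcile(results: list[dict]) -> dict[str, list[str]]:
    """Sequential step: depends on every parallel pass, so it runs after all finish."""
    merged: dict[str, list[str]] = {}
    for result in results:
        merged.setdefault(result["topic"], []).extend(result["findings"])
    return merged


async def analyze(parties: tuple[str, str]) -> dict[str, list[str]]:
    # Parallel where the passes are independent of one another...
    results = await asyncio.gather(*(research_pass(t, parties) for t in TOPICS))
    # ...sequential where the next stage needs all of their output.
    return reconcile(list(results))


report = asyncio.run(analyze(("Acme Corp", "Widget Inc")))
for topic, findings in report.items():
    print(topic, findings)
```

`asyncio.gather` returns results in the order the awaitables were passed, so the reconciled report keeps a stable topic order regardless of which pass finishes first.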

Your prompts are not our training set

Consumer plans for ChatGPT, Claude, and similar products may use your chats to improve models unless you opt out; business and enterprise plans generally carry different terms. Fine for casual work. Fraught when the subject is a live transaction.

No model training on your work

Taimet does not train foundation models on your analyses. Your outputs are not fodder for a shared weights update.

Names only

To run an analysis, we need only the names of the merging companies. No uploads, no deal room, no party decks. That is intentional, for security, compliance, and anti-gaming.

Built with public data

For investors, that means the analysis itself does not introduce MNPI. For counsel and agencies, it means speed without confidentiality negotiations just to get a first read.

How the approaches compare

The comparison spans three approaches: consultants; ChatGPT, Claude, Gemini, and similar chat products, which offer impressive general reasoning but are not a substitute for an antitrust-grade pipeline; and Taimet.

The solution is the system, not the chat box

Taimet is not trying to replace ChatGPT or Claude. We deploy models like them inside an architecture purpose-built for merger antitrust: expert-encoded reasoning, deterministic checkpoints, verification, and a report format professionals can use.

For event-driven investors, that means a forward view on what enforcers are likely to do, grounded in public data and checkable sources. For state enforcers, it means compressing days of file-building into minutes without waiting on waivers. For law firms and corporate teams, it means a first pass associates can actually explain.

These models are remarkable tools. Taimet is the system that makes them useful for the work that actually matters.

Ready to see the difference?

Run your first analysis in minutes.